The Real Cost of AI Systems in 2026: Scaling Is Not Cheap

Artificial intelligence now powers a significant portion of the digital economy — from SaaS platforms and automation tools to advanced analytics and generative systems. Yet while public attention focuses on model performance and impressive demos, a more important question often remains in the background: what does it actually cost to scale AI systems in real-world conditions?

In 2026, AI is no longer an experiment. It is infrastructure. And infrastructure carries operational, energy, and capital costs.

Visual illustration: InfoHelm

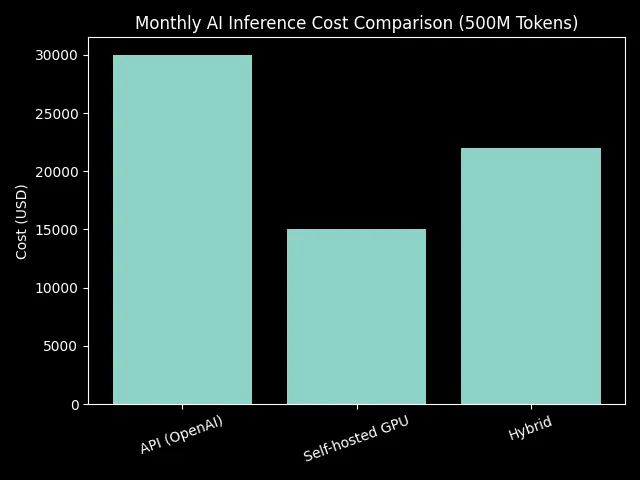

Inference API Cost Benchmark

Most AI applications today rely on external API services. The per-token price may appear low at first glance, but expenses grow rapidly as request volume increases.

Estimated monthly costs for a system processing approximately 500 million tokens per month:

| Provider | Cost per 1K Tokens (USD) | Estimated Monthly Cost at 500M Tokens (USD) |
|---|---|---|
| OpenAI GPT-4.x | 0.06 | 30,000 |
| Anthropic Claude | 0.05 | 25,000 |
| Google Gemini | 0.04 | 20,000 |
| Self-hosted GPU* | ~0.01 | ~5,000 |

*Self-hosted estimate includes direct infrastructure costs only, excluding team and maintenance expenses.

Chart 1: Monthly inference cost comparison at 500 million tokens.

As user volume increases, the external API model can generate monthly expenses in the tens of thousands of dollars.
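The arithmetic behind these estimates can be sketched in a few lines. This is a minimal illustration, not a pricing tool: it assumes the listed rates are flat and expressed in USD per 1,000 tokens (the unit under which 0.06 yields 30,000 USD at 500 million tokens), and it ignores the input/output price split and volume discounts that real providers apply. The function name `monthly_cost_usd` is illustrative.

```python
# Rates as listed in the benchmark table, interpreted as USD per 1,000 tokens
# (an assumption; real price sheets differ by model and by input/output tokens).
RATES_PER_1K_USD = {
    "OpenAI GPT-4.x": 0.06,
    "Anthropic Claude": 0.05,
    "Google Gemini": 0.04,
    "Self-hosted GPU": 0.01,
}


def monthly_cost_usd(tokens_per_month: int, rate_per_1k_usd: float) -> float:
    """Monthly spend for a token volume at a flat per-1K-token rate."""
    return tokens_per_month / 1_000 * rate_per_1k_usd


if __name__ == "__main__":
    # Show how the bill scales with volume: cost is linear in tokens.
    for volume in (50_000_000, 500_000_000, 5_000_000_000):
        print(f"{volume:,} tokens/month")
        for provider, rate in RATES_PER_1K_USD.items():
            cost = monthly_cost_usd(volume, rate)
            print(f"  {provider:<18} {cost:>12,.0f} USD")
```

Because the cost is linear in token volume, a tenfold increase in traffic means a tenfold increase in the bill, which is why a rate that looks negligible per request dominates the budget at scale.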

Cloud Infrastructure and GPU Dependency

Companies transitioning to their own infrastructure face different challenges. GPU instances remain the core resource.

Typical market pricing:

| Instance Type | Hourly Cost (USD) | Estimated Monthly Cost at 200 h (USD) |
|---|---|---|
| GPU A100 | 3.00 | 600 |
| GPU V100 | 2.50 | 500 |
| CPU Only | 0.40 | 80 |

However, scaling requires more than a single instance:

- load balancing

- reserved capacity for peak traffic

- monitoring and log analysis

- backup and security systems

Once these components are included, the real infrastructure cost is often well above an estimate based on a single instance.
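A rough way to see the gap is to extend the single-instance calculation with replica count, reserved peak capacity, and an overhead factor. The sketch below is illustrative only: the `FleetEstimate` class, the 25% peak reserve, and the 15% overhead are hypothetical placeholders, not measured figures.

```python
from dataclasses import dataclass


@dataclass
class FleetEstimate:
    hourly_rate_usd: float     # per-instance price, e.g. 3.00 for the A100 row
    hours_per_month: float     # 200 in the table; about 730 for always-on service
    instances: int             # replicas needed to serve baseline load
    peak_reserve: float = 0.0  # extra capacity as a fraction of baseline (assumed)
    overhead: float = 0.0      # monitoring, logs, backup as a fraction of compute (assumed)

    def monthly_cost_usd(self) -> float:
        """Compute cost scaled up by peak reserve and operational overhead."""
        compute = self.hourly_rate_usd * self.hours_per_month * self.instances
        compute *= 1 + self.peak_reserve
        return compute * (1 + self.overhead)


if __name__ == "__main__":
    single = FleetEstimate(hourly_rate_usd=3.00, hours_per_month=200, instances=1)
    fleet = FleetEstimate(
        hourly_rate_usd=3.00,
        hours_per_month=730,   # 365 days * 24 h / 12 months
        instances=4,           # hypothetical replica count
        peak_reserve=0.25,     # hypothetical
        overhead=0.15,         # hypothetical
    )
    print(f"Single instance, 200 h: {single.monthly_cost_usd():,.0f} USD")
    print(f"Always-on fleet:        {fleet.monthly_cost_usd():,.0f} USD")
```

Under these assumed inputs the fleet costs roughly twenty times the single-instance figure, mostly because production services run continuously rather than for 200 hours a month.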

The Shift from External APIs to Self-Hosted Infrastructure

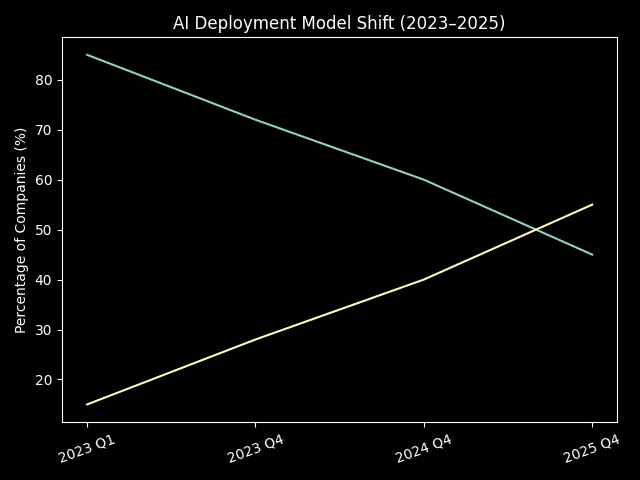

Industry trends show a clear movement toward hybrid or self-hosted deployment models.

Estimated distribution by implementation model:

- 2023 Q1: 85% external API / 15% self-hosted

- 2023 Q4: 72% external API / 28% self-hosted

- 2024 Q4: 60% external API / 40% self-hosted

- 2025 Q4: 45% external API / 55% self-hosted

Chart 2: Deployment model shift in AI systems from 2023 to 2025.

In under three years, self-hosted deployment grew from a 15% minority to a 55% majority. As workload increases, companies seek more sustainable long-term cost structures.
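The incentive behind this shift can be illustrated with a break-even estimate: self-hosting adds a fixed monthly cost but lowers the marginal cost per token, so it pays off only above a certain volume. The sketch below assumes rates in USD per 1,000 tokens and a hypothetical fixed cost of 20,000 USD per month for staff and maintenance; neither the function name nor that figure comes from the data above.

```python
from typing import Optional


def break_even_tokens(
    fixed_monthly_usd: float,
    api_rate_per_1k_usd: float,
    self_hosted_rate_per_1k_usd: float,
) -> Optional[float]:
    """Monthly token volume above which self-hosting is cheaper than the API.

    Returns None when the self-hosted marginal rate is not lower than the
    API rate, in which case self-hosting never breaks even.
    """
    saving_per_1k = api_rate_per_1k_usd - self_hosted_rate_per_1k_usd
    if saving_per_1k <= 0:
        return None
    return fixed_monthly_usd / saving_per_1k * 1_000


if __name__ == "__main__":
    # Hypothetical fixed cost; rates taken as USD per 1,000 tokens (assumed unit).
    volume = break_even_tokens(
        fixed_monthly_usd=20_000,
        api_rate_per_1k_usd=0.06,
        self_hosted_rate_per_1k_usd=0.01,
    )
    if volume is None:
        print("Self-hosting never breaks even at these rates.")
    else:
        print(f"Break-even at about {volume / 1e6:,.0f} million tokens per month")
```

With these assumed inputs the break-even point is about 400 million tokens per month. Below that volume the API is cheaper; above it, the fixed cost of running your own infrastructure is spread thinly enough to win.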

AI SaaS Margins: The New Reality

Unlike traditional software — where marginal costs are near zero — AI systems carry a direct cost per request. This means:

- user growth does not translate directly into profit growth, because costs rise with every request

- scaling requires precise cost optimization

- margins remain under constant pressure
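The effect on margins can be sketched per user. The example below is a simplified model under stated assumptions: a flat subscription price, a flat inference rate in USD per 1,000 tokens, and inference as the only variable cost. All input values and the `gross_margin` name are hypothetical.

```python
def gross_margin(
    price_usd: float,
    requests_per_month: int,
    tokens_per_request: int,
    rate_per_1k_usd: float,
) -> float:
    """Per-user gross margin as a fraction of subscription revenue."""
    if price_usd <= 0:
        raise ValueError("price_usd must be positive")
    inference_cost = requests_per_month * tokens_per_request / 1_000 * rate_per_1k_usd
    return (price_usd - inference_cost) / price_usd


if __name__ == "__main__":
    # Hypothetical plan: 20 USD/month, 2,000 tokens per request, 0.06 USD per 1K tokens.
    for label, requests in (("light user", 50), ("heavy user", 300)):
        margin = gross_margin(20.0, requests, 2_000, 0.06)
        print(f"{label}: {margin:.0%} gross margin")
```

In this hypothetical plan the light user is comfortably profitable while the heavy user costs more to serve than the subscription brings in, which is why usage caps, caching, and model routing matter for application-layer pricing.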

As a result, investment capital in 2026 increasingly favors infrastructure and hardware providers, while application-layer companies must carefully balance performance and cost efficiency.

Conclusion

The numbers show that AI economics is not purely a technological issue; it is just as much a financial one.

Scaling AI systems requires disciplined resource management, deep understanding of token economics, and strategic infrastructure planning. In the coming years, competitive advantage will depend not only on model quality, but on cost structure efficiency.

Note: This article is educational and informational.